In our digitally interconnected era, the cybersecurity focus has shifted to the critical integrity of software supply chains.

Software supply chain attacks exploit vulnerabilities in creating, distributing, or updating software, leveraging trust to compromise systems and lead to data breaches. These attacks take various forms, such as inserting malicious code into open-source libraries or compromising build environments.

With organizations relying more on third-party components and collaborative development, the attack surface has expanded. NIST does not itself certify organizations, but aligning with NIST guidance demonstrates an organization’s commitment to maintaining robust security measures consistent with national standards.

This blog aims to simplify the complexities of software supply chain security and offers practical insights into implementing NIST recommendations to counter these evolving threats.

Understanding software supply chain attacks

Software supply chain attacks are sophisticated cyber threats that exploit vulnerabilities within the software development, distribution, or deployment processes. These attacks aim to compromise the integrity of software by injecting malicious code, compromising dependencies, or manipulating the build and update processes.

Organizations are susceptible to software supply chain attacks primarily because of two factors:

- Privileged access: Many software products require elevated access levels to function properly. Customers often accept the default access settings, which gives attackers an avenue for intrusion. Because many software products are deployed widely across the enterprise, unauthorized access gained through them can have a substantial impact on critical systems within the organization.

- Frequent communication: While software updates are essential, regular communication between vendors and customers creates a potential vulnerability. Hackers exploit this trusted communication channel by posing as vendors, disseminating fake updates embedded with malware, or obstructing genuine security updates from reaching the customer. Consequently, customers remain exposed to existing threats, highlighting the risks associated with the frequent exchange of software updates.

| Types of software supply chain attacks | Description |
| --- | --- |
| Tainted components | Attackers inject malicious code or backdoors into software components, including libraries and frameworks, often exploiting trust in widely used open-source projects. |
| Compromised build environments | Attackers target the environments where software is built, introducing malicious modifications to source code, build scripts, or build tools. |
| Distribution channel exploitation | Malicious actors compromise the channels through which software is distributed, allowing them to deliver tampered versions of software to end-users. |

Common vectors in software supply chain attacks

A “vector” refers to the method or pathway through which an attacker gains access to a system or network. Common vectors encompass the various entry points that adversaries exploit to compromise the integrity of the software development, distribution, or deployment processes.

In the context of software supply chain attacks, common vectors include:

- Third-party dependencies: Many software projects rely on third-party libraries and components. Attackers exploit vulnerabilities in these dependencies to compromise the overall software supply chain.

- Build toolchain compromises: Manipulating build tools and processes can introduce malicious code into the final software product. This could occur through compromised build servers or build scripts.

- Update mechanism exploitation: Attackers compromise the mechanisms used to update software, distributing malicious updates to users who unknowingly install compromised versions.

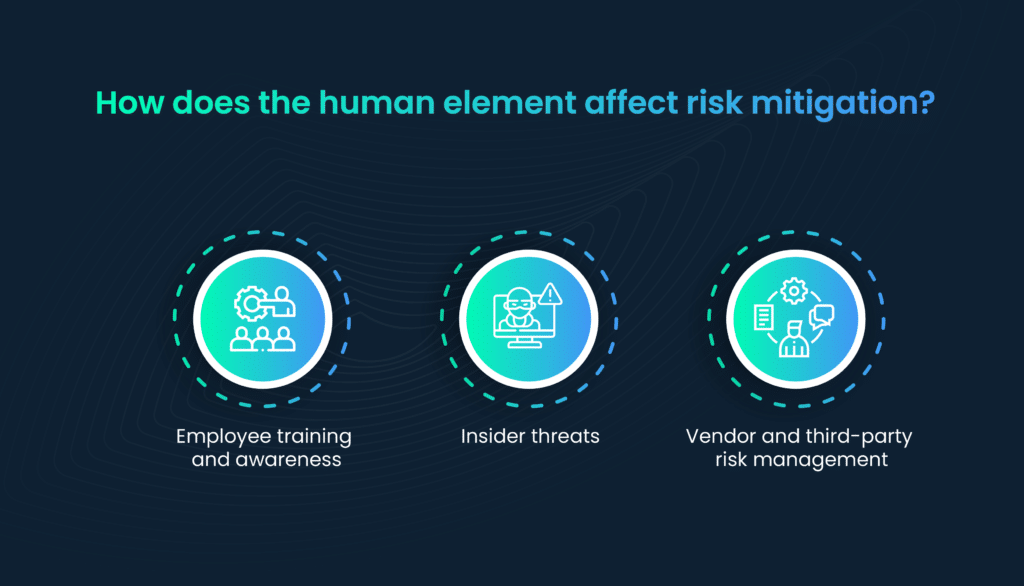

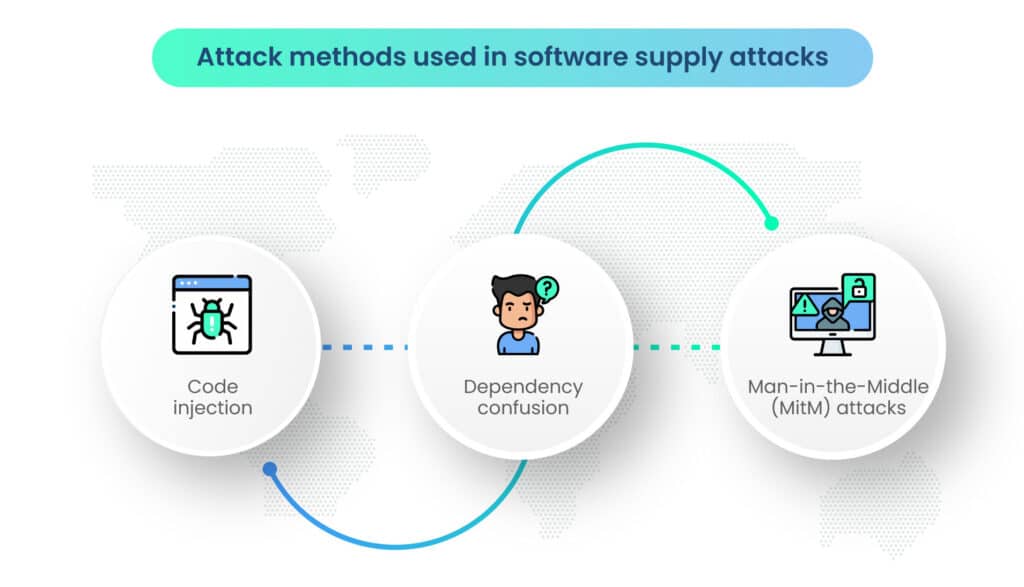

Attack techniques used in software supply chain attacks

Attack techniques are the specific methods and approaches that attackers use to carry out their malicious activities once they’ve gained access through a vector. These techniques involve manipulating systems, injecting malicious code, or taking advantage of vulnerabilities to achieve their objectives.

- Code injection: Malicious code is injected into legitimate software components, allowing attackers to gain unauthorized access, exfiltrate data, or conduct other malicious activities.

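- Dependency confusion: Attackers publish malicious packages to public repositories under the same names as an organization’s internal packages, so that package managers resolve the public version instead of the private one. A related technique, typosquatting, uses names that closely resemble legitimate dependencies to trick developers into unknowingly installing the compromised components.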

- Man-in-the-Middle (MitM) attacks: Intercepting communication between software components and servers to modify or replace legitimate data with malicious content during the download or update process.
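As a rough illustration of how lookalike package names might be caught before installation, the sketch below compares requested dependency names against an allowlist. The `APPROVED` set, the `find_lookalikes` function, and the 0.8 similarity threshold are all assumptions made for this example, not part of any NIST guidance or package manager.

```python
from difflib import SequenceMatcher

# Hypothetical allowlist of packages the organization has approved.
APPROVED = {"requests", "numpy", "cryptography"}


def find_lookalikes(requested, approved=APPROVED, threshold=0.8):
    """Return (requested, approved) pairs where a non-approved name
    closely resembles an approved one."""
    suspicious = []
    for name in requested:
        candidate = name.lower()
        if candidate in approved:
            continue  # exact match with an approved package
        for known in sorted(approved):
            similarity = SequenceMatcher(None, candidate, known).ratio()
            if similarity >= threshold:
                suspicious.append((name, known))
    return suspicious


if __name__ == "__main__":
    print(find_lookalikes(["requests", "reqeusts", "flask"]))
```

A check like this only flags names for human review. It would not catch a dependency confusion attack that reuses an internal name exactly, which is usually handled by pinning the package index or registry scope instead.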

Software supply chain attack examples include the SolarWinds incident, discovered in late 2020, which involved the compromise of the SolarWinds Orion software updates. Malicious actors inserted a backdoor into the updates, leading to the compromise of numerous high-profile organizations and government agencies.

NotPetya, a destructive malware variant first seen in 2017, propagated through a compromised update of a Ukrainian accounting software package called M.E.Doc. The malware spread globally, causing widespread disruption and financial damage.

The importance of NIST recommendations

The National Institute of Standards and Technology (NIST), a cornerstone in the realm of cybersecurity standards and guidelines, recognizes the gravity of software supply chain threats.

Established by the U.S. government, NIST’s mission includes developing and promoting standards to enhance the security and resilience of critical information systems.

NIST recommendations stand as a formidable defense against software supply chain attacks, particularly in mitigating the risks associated with third-party data breaches and vendor-related incidents.

The framework underscores the importance of understanding the interconnected nature of the supply chain, with a focus on securing every link to prevent data breaches. By incorporating robust security measures throughout the Software Development Lifecycle (SDLC), NIST provides a proactive approach to counteracting the vulnerabilities that could lead to data breaches.

The guidelines extend to vendor and supplier management, where NIST recommends stringent risk assessments, audits, and the inclusion of security requirements in contractual agreements to fortify against potential data breaches originating from external partners.

By adhering to NIST’s guidance, following NIST password guidelines, implementing NIST controls, and integrating the NIST privacy framework, organizations can establish a robust security foundation, implementing measures to detect, prevent, and respond to potential supply chain compromises.

NIST’s influence extends beyond the national landscape, with its recommendations often serving as a global benchmark for cybersecurity best practices. The institute’s expertise in developing standards that balance security and usability makes its guidance invaluable for organizations seeking effective strategies to safeguard their software supply chains.

NIST compliance and other relevant standards

NIST guidelines serve as a benchmark for organizations aiming to achieve and maintain regulatory compliance in the realm of software supply chain security. Compliance with NIST standards not only enhances cybersecurity but also aligns organizations with industry best practices.

The NIST SP 800-161 document provides guidelines on supply chain risk management practices for federal information systems.

While not specific to supply chain security, the NIST Cybersecurity Framework (CSF) outlines a set of core functions (Identify, Protect, Detect, Respond, and Recover) that can be applied to enhance overall cybersecurity, including supply chain considerations. NIST CSF 2.0, released in February 2024, adds a sixth function, Govern, and gives supply chain risk management more explicit coverage.

Aligning with both NIST recommendations and industry regulations enhances the overall risk mitigation strategy, addressing a broad spectrum of potential threats.

Compliance with privacy regulations, such as GDPR or HIPAA, often intersects with supply chain security. NIST guidelines provide a foundation for addressing these considerations within the broader supply chain context.

Organizations should be aware of industry-specific standards that may impose additional requirements. NIST recommendations can often be tailored to meet these specific standards.

Beyond NIST standards, organizations must navigate a complex legal and compliance landscape. Understanding and adhering to relevant regulations is vital for avoiding legal ramifications and ensuring the security of the software supply chain.

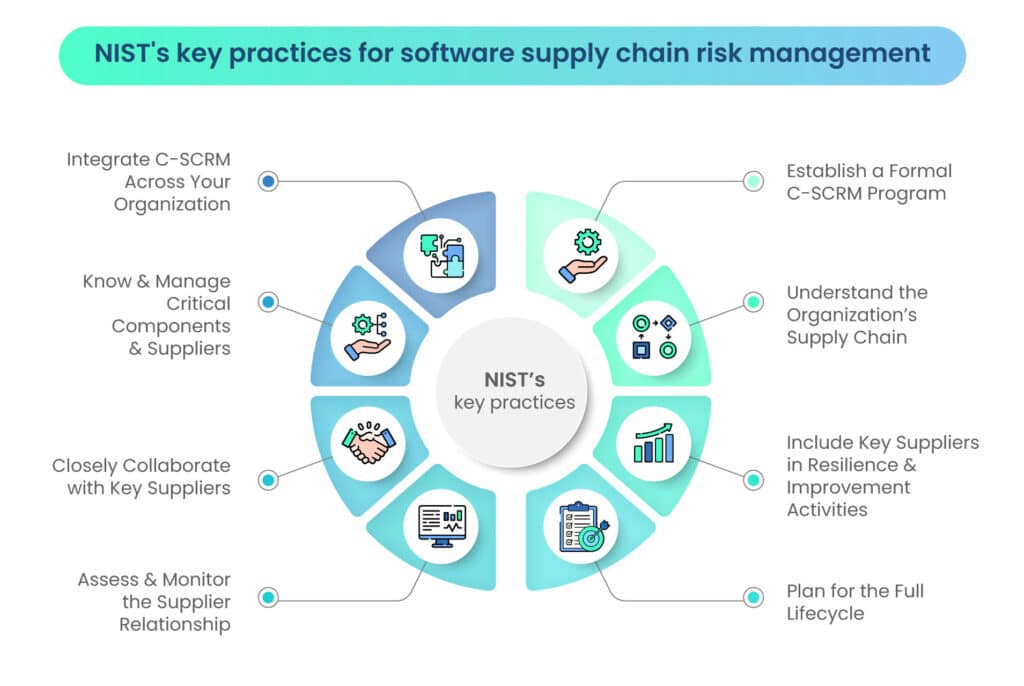

NIST’s eight key practices for comprehensive software supply chain risk management

Should your organization be prepared to adopt a C-SCRM (Cyber Supply Chain Risk Management) approach aimed at preventing and mitigating software vulnerabilities while reducing overall risk, NIST suggests the following eight key practices:

- Integrate C-SCRM practices seamlessly across all facets of your organization.

- Establish a structured and formalized C-SCRM program to ensure systematic implementation.

- Gain a comprehensive understanding of critical components and suppliers, actively managing them to minimize risks.

- Develop a deep understanding of your organization’s supply chain, identifying potential vulnerabilities and areas of improvement.

- Cultivate strong collaboration with key suppliers to enhance communication and address potential vulnerabilities collectively.

- Engage key suppliers actively in activities focused on resilience and continuous improvement.

- Regularly assess and monitor the relationships with suppliers to ensure ongoing compliance with security standards and best practices.

- Develop comprehensive plans that consider the entire lifecycle of the software, addressing potential risks at every stage.

NIST cybersecurity framework for software supply chain risk management

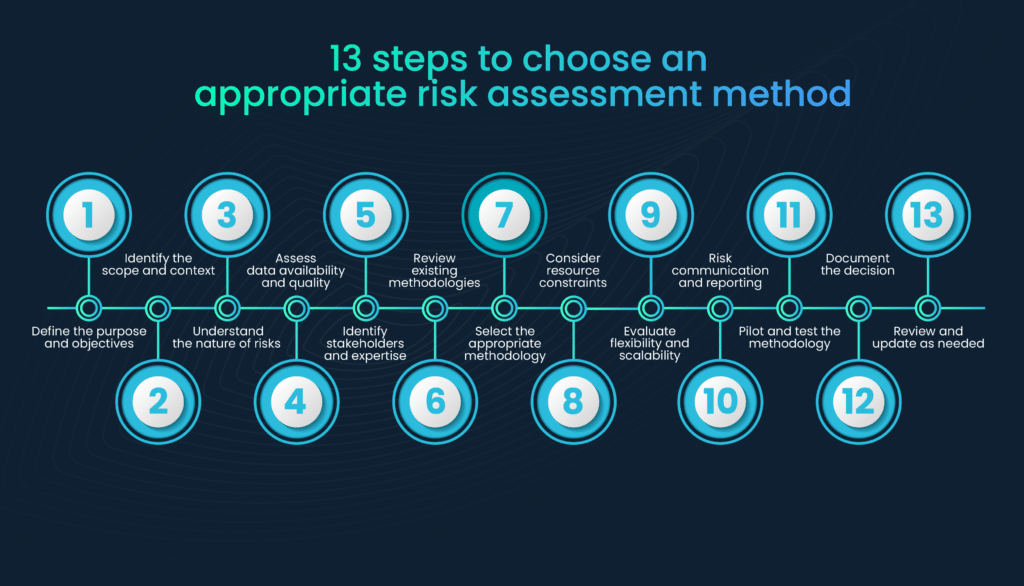

The NIST framework for supply chain risk management emphasizes the importance of identifying, assessing, and mitigating risks across the supply chain to safeguard critical assets and information.

NIST’s framework encourages organizations to adopt a risk management approach that aligns with their business goals. By integrating supply chain risk management into overall risk management practices, organizations can systematically address the unique challenges posed by software supply chain threats.

While the NIST framework provides a broad approach to supply chain risk management, organizations should tailor these guidelines to address the specific nuances of software supply chain security. This involves incorporating measures to secure the software development lifecycle, ensure code integrity, and establish robust vendor management practices.

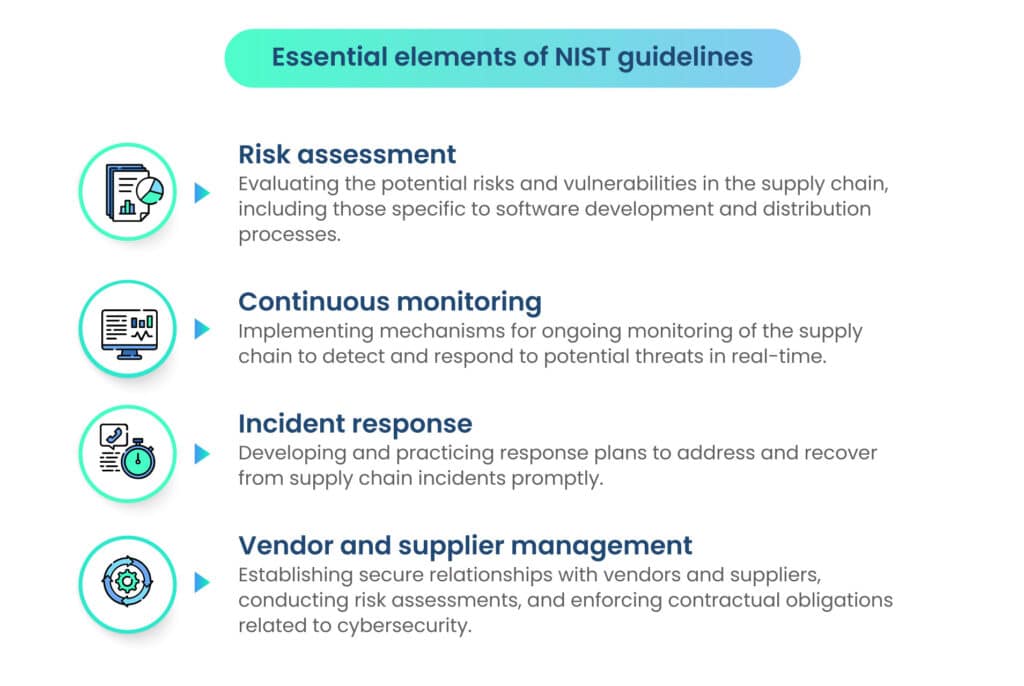

Key components of NIST recommendations

The NIST framework provides a structured approach to supply chain risk management, consisting of key components such as those outlined below:

A. Risk assessment and management

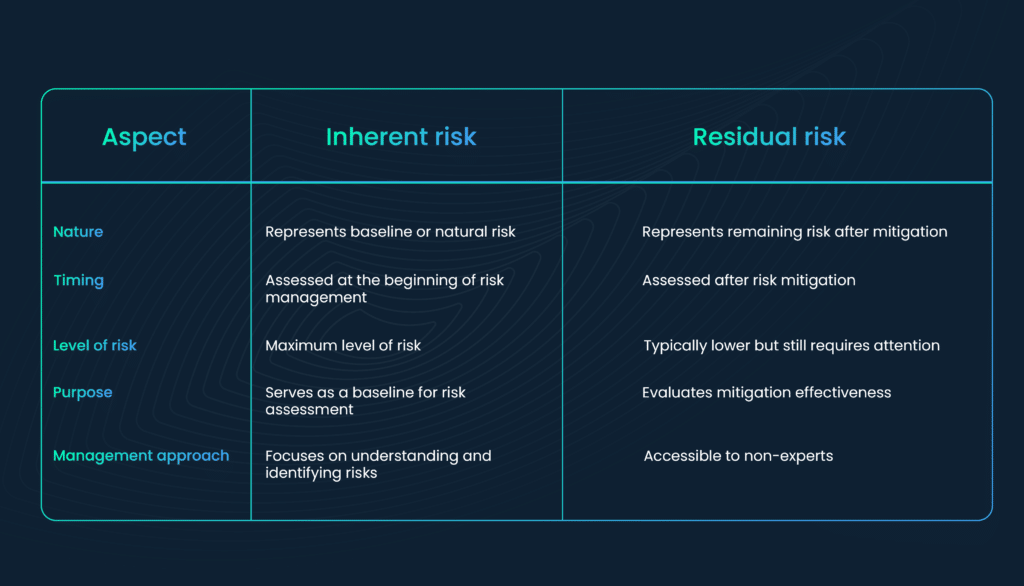

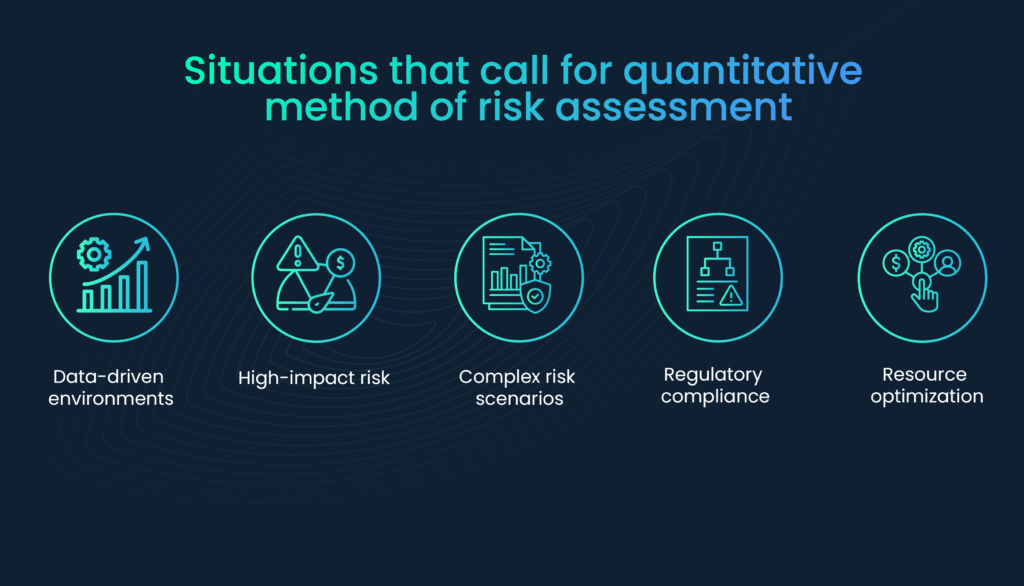

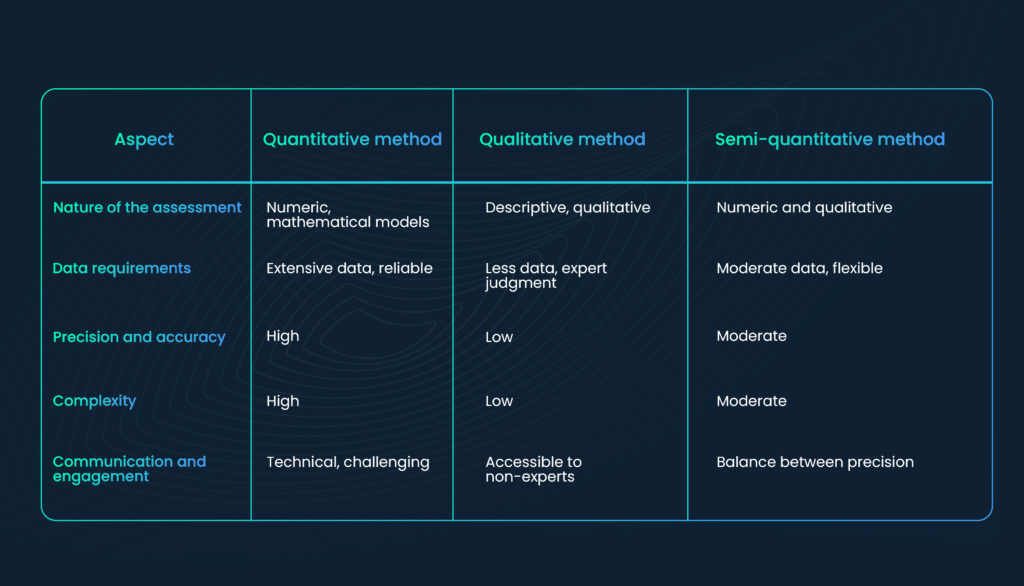

Risk assessment is a fundamental step in fortifying the software supply chain against potential threats. It involves identifying vulnerabilities, understanding their potential impact, and assessing the likelihood of exploitation.

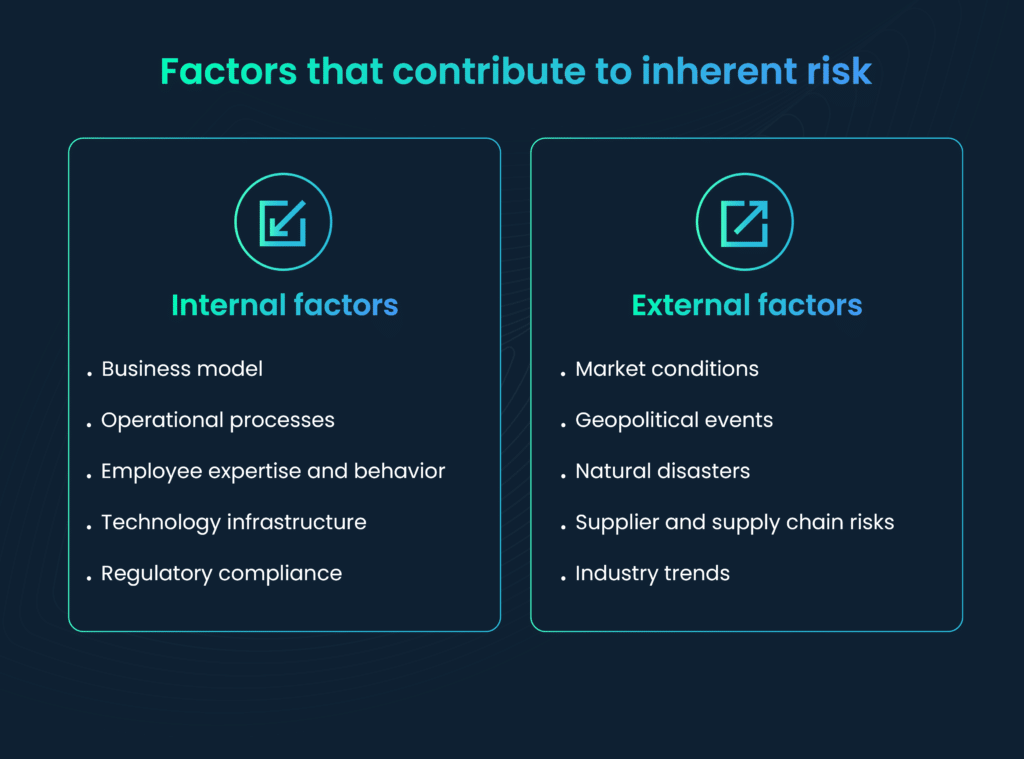

The inherent risk within supply chains amplifies significantly as a company opts to delegate more processes to software vendors. Every stage in the software development lifecycle—design, production, distribution, acquisition/deployment, maintenance, and disposal—brings its own set of risks.

Examples include the possibility of foreign parts arriving with embedded malware or the injection of malware during the design and production phases through a compromised build server. Distribution introduces the risk of new software becoming infected after leaving the factory. Moreover, threat actors often target the maintenance phase, utilizing routine-looking updates that conceal backdoor malware, posing a substantial threat to customers.

A successful risk assessment considers both technical and non-technical factors. This includes evaluating the security practices of vendors and suppliers, assessing the resilience of the organization’s infrastructure, and understanding the potential impact of a supply chain compromise on business operations.

Key components of supply chain risk assessment include:

- Dependency analysis: Examining dependencies within the software supply chain to identify potential points of compromise, ensuring that third-party libraries and components are secure and regularly updated.

- Build environment evaluation: Assessing the security of the build environment to detect and remediate vulnerabilities in build tools, scripts, and servers.

- Distribution channel security: Ensuring the integrity of distribution channels by implementing secure update mechanisms and protecting against man-in-the-middle attacks during software delivery.
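As one small piece of dependency analysis, a team might check that every third-party dependency is pinned to an exact version, so that builds do not silently pick up a new release. The sketch below assumes a pip-style requirements file; the `unpinned_requirements` function is a hypothetical helper and handles only the simple `name==version` form.

```python
def unpinned_requirements(lines):
    """Return requirement lines that are not pinned with '=='.

    Assumes simple pip-style lines; comments, blank lines, and
    option lines (starting with '-') are ignored.
    """
    flagged = []
    for raw in lines:
        line = raw.split("#", 1)[0].strip()  # drop trailing comments
        if not line or line.startswith("-"):
            continue
        if "==" not in line:
            flagged.append(line)
    return flagged


if __name__ == "__main__":
    sample = ["requests==2.31.0", "numpy>=1.24", "# dev tools", "flask"]
    print(unpinned_requirements(sample))
```

Pinning alone does not prove a package is safe. It only makes the set of components under review explicit and repeatable.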

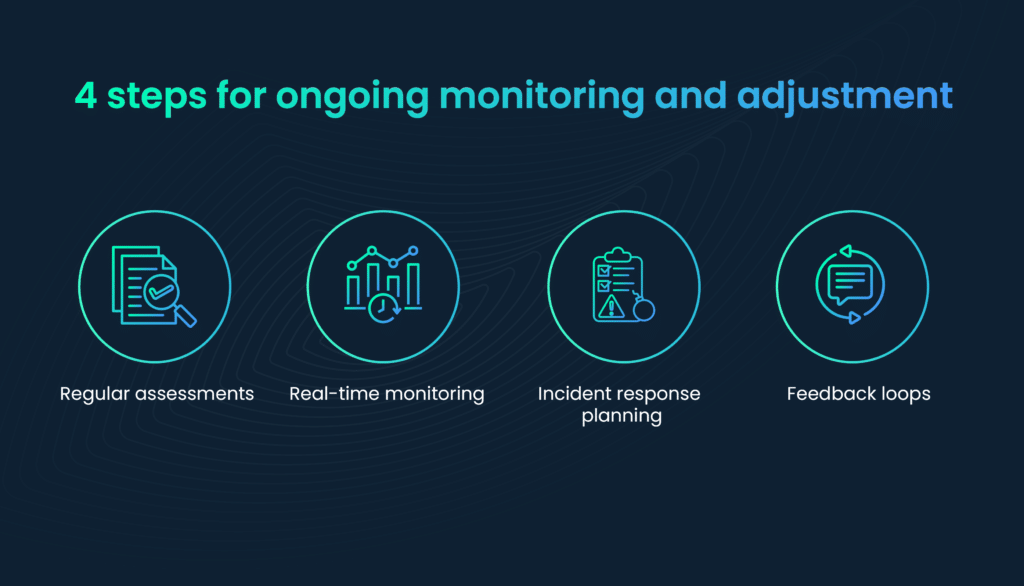

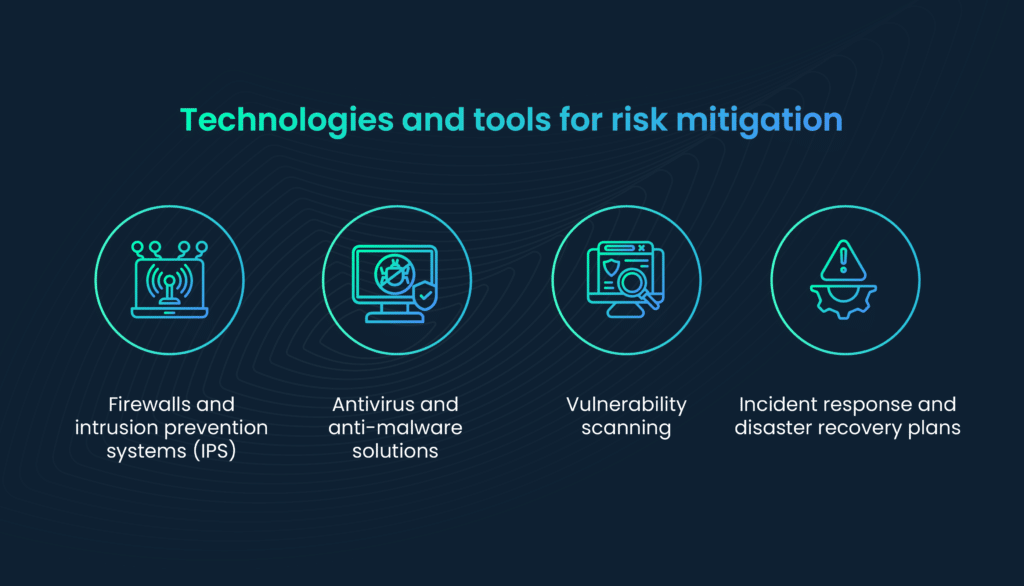

B. Continuous monitoring for risks and securing the Software Development Lifecycle (SDLC)

Supply chain risks evolve over time. Continuous monitoring is crucial to adapting to these changes and detecting new vulnerabilities promptly. Automated tools, threat intelligence feeds, and ongoing collaboration with vendors contribute to a proactive and adaptive risk management strategy.

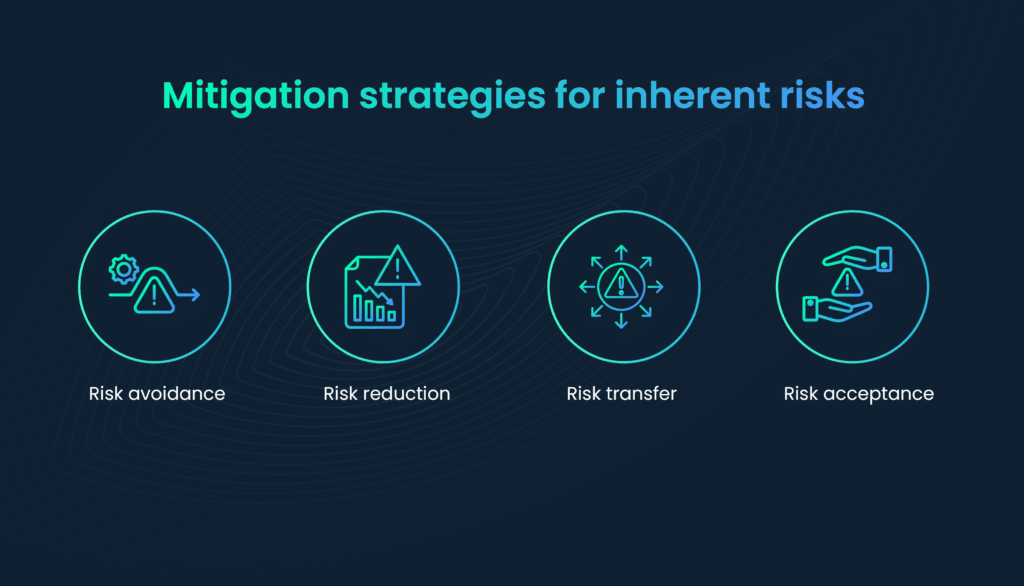

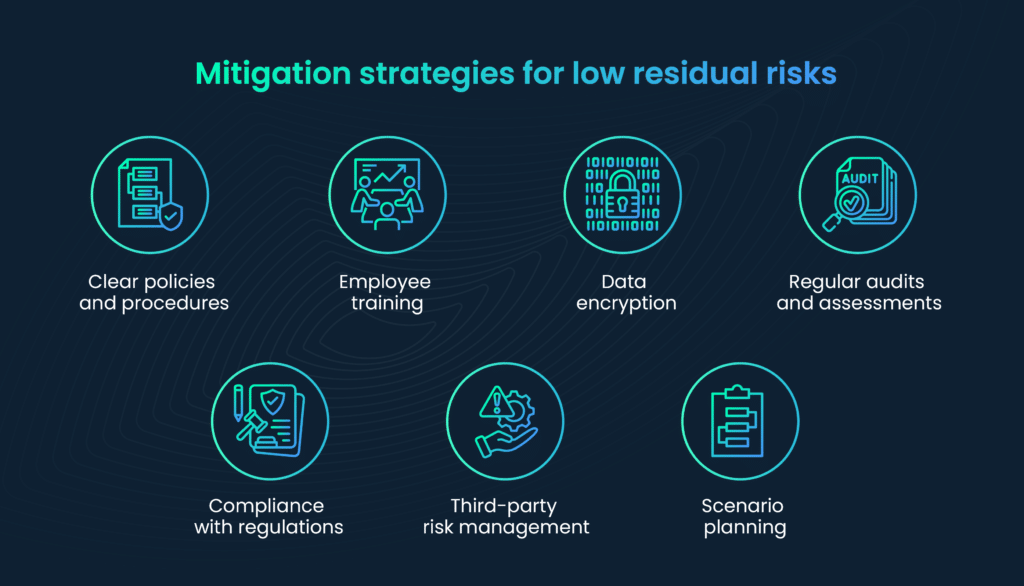

A risk mitigation strategy should prioritize addressing high-impact, high-likelihood risks first. This involves allocating resources efficiently to mitigate the most critical vulnerabilities within the software supply chain.
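A common way to rank risks, sketched below, is to score each one as likelihood multiplied by impact and sort in descending order. The 1 to 5 scales, the `Risk` class, and the sample entries are assumptions for illustration only; an organization would substitute its own scoring model.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def prioritize(risks):
    """Return risks ordered from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


if __name__ == "__main__":
    register = [
        Risk("Unpinned third-party library", likelihood=4, impact=3),
        Risk("Compromised build server", likelihood=2, impact=5),
        Risk("Outdated internal wiki plugin", likelihood=3, impact=1),
    ]
    for risk in prioritize(register):
        print(risk.score, risk.name)
```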

Collaborative efforts within the industry, such as sharing threat intelligence and best practices, contribute to a collective defense against common threats. Initiatives like the NIST National Cybersecurity Center of Excellence (NCCoE) encourage collaboration in addressing cybersecurity challenges.

A secure SDLC is foundational to mitigating software supply chain risks. Embedding security practices throughout the development process helps identify and address vulnerabilities early, reducing the likelihood of introducing security flaws into the final product.

NIST recommends integrating security into each phase of the SDLC. This includes:

- Requirements analysis: Clearly defining security requirements and constraints.

- Design and architecture: Implementing secure design principles and conducting threat modeling.

- Coding and implementation: Following secure coding practices and conducting regular code reviews.

- Testing: Incorporating security testing, including static analysis, dynamic analysis, and penetration testing.

- Deployment: Ensuring secure deployment practices, including code signing and integrity checks.

NIST guidelines for code integrity and verification

Code integrity is paramount to software supply chain security. Verifying the authenticity and integrity of source code and binaries helps prevent the introduction of malicious components during the development and distribution processes.

NIST recommendations for code integrity include:

- Code Signing: Implementing code signing practices so that recipients can verify who published the code and confirm that it has not been tampered with since it was signed.

- Checksums and Hash Verification: Verifying the integrity of files using checksums and cryptographic hash functions to detect any unauthorized changes.
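Hash verification can be sketched in a few lines using Python’s standard library. The example below assumes the publisher provides a SHA-256 digest through a separate trusted channel; the function names `sha256_of_file` and `verify_artifact` are illustrative, not part of any NIST tool.

```python
import hashlib
import hmac


def sha256_of_file(path, chunk_size=65536):
    """Compute the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path, expected_hex):
    """Return True if the file's digest matches the published one."""
    actual = sha256_of_file(path)
    # compare_digest avoids leaking information through timing differences
    return hmac.compare_digest(actual, expected_hex.strip().lower())
```

A matching hash shows only that the file is the one the digest was computed from. If an attacker can replace both the file and the published digest, the check passes, which is why a digest is usually paired with a signature or distributed over a separate channel.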

NIST automated testing tools

The threat landscape is dynamic, requiring continuous monitoring throughout the SDLC. Automated tools and processes can detect vulnerabilities and security issues as the code evolves.

Automation is key to efficiently integrating security measures. Automated testing tools, used in line with NIST guidance, can scan code for vulnerabilities, identify insecure dependencies, and assess overall code quality, contributing to a proactive security stance.
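One way automation might be wired into a pipeline is a simple gate that fails the build when a scanner reports findings at or above a chosen severity. The sketch below assumes findings arrive as dictionaries with a `severity` field; that format, the severity names, and the `gate` function are hypothetical rather than the output of any specific scanner.

```python
# Assumed severity ranking; real scanners may use different labels.
SEVERITY_ORDER = {"low": 1, "medium": 2, "high": 3, "critical": 4}


def gate(findings, fail_at="high"):
    """Return (passed, blocking_findings) for a list of scanner findings."""
    threshold = SEVERITY_ORDER[fail_at]
    blocking = [
        f for f in findings
        if SEVERITY_ORDER[f["severity"]] >= threshold
    ]
    return (len(blocking) == 0, blocking)


if __name__ == "__main__":
    results = [
        {"id": "DEP-1", "severity": "low"},
        {"id": "DEP-2", "severity": "critical"},
    ]
    passed, blocking = gate(results)
    print("build passes" if passed else f"build blocked by {blocking}")
```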

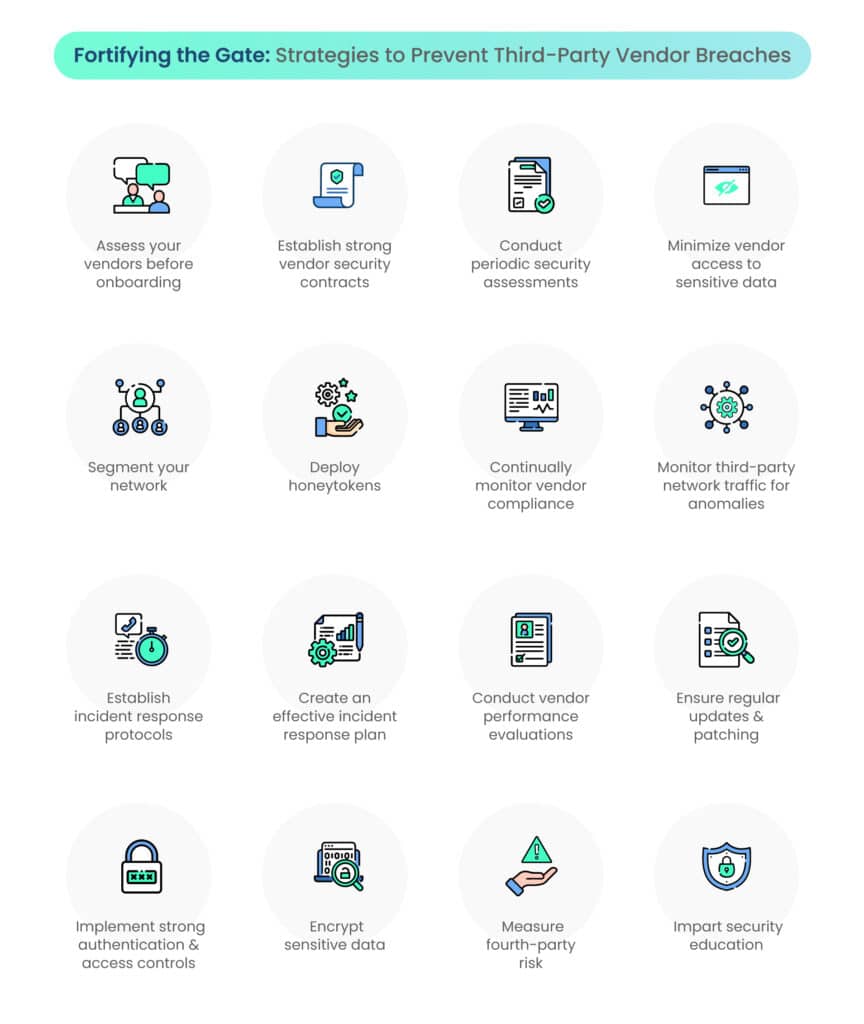

C. NIST guidelines for supplier and vendor management

In an interconnected digital landscape, organizations often rely on external suppliers and vendors for various components of their software. Establishing secure relationships with these entities is crucial to ensuring the integrity and security of the software supply chain.

NIST guidelines for supplier management include:

- Risk assessments: Conducting thorough risk assessments of suppliers, evaluating their security practices, and ensuring they align with industry standards and regulations.

- Security audits: Periodically auditing suppliers to verify compliance with security requirements and to identify any potential risks or vulnerabilities.

NIST guidelines for vendor management highlight the importance of vendor risk assessments as a proactive measure to evaluate the security posture of third-party suppliers. This involves the use of:

- Security questionnaires: Employing detailed security questionnaires to assess a vendor’s security practices, including their approach to software supply chain security.

- Third-party security audits: Engaging third-party auditors to independently assess the security practices of critical suppliers.
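Questionnaire results are easier to compare across vendors when they are reduced to a score. The sketch below shows one possible weighting scheme; the question identifiers, weights, and `questionnaire_score` function are invented for illustration and would need to reflect an organization’s own requirements.

```python
# Hypothetical questionnaire: question id -> weight (higher = more important).
WEIGHTS = {
    "signs_release_artifacts": 3,
    "secure_update_mechanism": 2,
    "incident_response_plan": 3,
    "secure_coding_practices": 2,
}


def questionnaire_score(answers, weights=WEIGHTS):
    """Return the weighted share of 'yes' answers, between 0.0 and 1.0.

    Missing answers are treated as 'no'.
    """
    total = sum(weights.values())
    earned = sum(w for q, w in weights.items() if answers.get(q) is True)
    return earned / total


if __name__ == "__main__":
    vendor_answers = {
        "signs_release_artifacts": True,
        "incident_response_plan": True,
    }
    print(questionnaire_score(vendor_answers))
```

A score like this is a screening aid. It does not replace the independent audits described above, since questionnaire answers are self-reported.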

Contractual agreements play a pivotal role in establishing expectations for security. NIST recommends including specific clauses related to software supply chain security in contracts. They touch upon these aspects:

- Security requirements: Clearly defining security requirements that suppliers must adhere to, including secure coding practices, update mechanisms, and incident response procedures.

- Notification protocols: Outlining notification procedures in the event of a security incident within the supply chain, promoting transparency and timely response.

D. NIST’s incident response recommendations

Recovering from a supply chain compromise is not a one-time effort but an ongoing process. NIST recommends a continuous approach to recovery that involves not only restoring affected systems but also enhancing overall security posture.

- Incident response plans: Organizations should develop robust incident response plans that specifically address supply chain incidents. These plans should outline roles and responsibilities, communication strategies, and the steps to be taken during each phase of an incident.

- Tabletop exercises: Regularly conducting tabletop exercises ensures that the incident response team is well-prepared and familiar with their roles. These simulated scenarios help identify strengths and weaknesses in the response plan and facilitate continuous improvement.

- Early detection and rapid response: Early detection of supply chain attacks is crucial for minimizing the impact and preventing the spread of compromises. Automated monitoring tools that analyze network traffic, system logs, and behavioral anomalies play a vital role in identifying suspicious activities.

- Isolation and containment: In the event of a detected compromise, the immediate isolation and containment of affected components are critical. This prevents further spread and limits the potential damage.

- Forensic analysis: Conducting a thorough forensic analysis is essential to understanding the scope of the compromise. This involves examining logs, system states, and other artifacts to identify the source of the attack and the extent of the impact.

- Rebuilding trust: Rebuilding trust in the software supply chain is paramount. Transparent communication with stakeholders, customers, and partners is crucial to keeping them informed about the incident, the steps taken for recovery, and the measures implemented to prevent future occurrences.

- Improving security posture: Organizations should leverage lessons learned from incidents to enhance their overall security posture. This includes implementing additional security measures, conducting thorough reviews of existing security protocols, and investing in technologies that can better safeguard the software supply chain.
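When scoping a compromise for containment and forensic analysis, it helps to know which internal components depend, directly or transitively, on the affected one. The sketch below walks a dependency graph to find them. The graph format (component name mapped to the list of things it depends on), the component names, and the `affected_components` function are assumptions for this example.

```python
from collections import deque


def affected_components(depends_on, compromised):
    """Return every component that directly or transitively depends
    on the compromised one."""
    # Invert the graph: dependency -> set of components that use it.
    dependents = {}
    for component, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(component)

    # Breadth-first walk outward from the compromised component.
    seen = set()
    queue = deque([compromised])
    while queue:
        current = queue.popleft()
        for parent in dependents.get(current, ()):
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return seen


if __name__ == "__main__":
    graph = {
        "web": ["auth", "logging"],
        "auth": ["crypto-lib"],
        "billing": ["auth"],
        "logging": [],
    }
    print(sorted(affected_components(graph, "crypto-lib")))
```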

NIST recommendations for end users

End users play a crucial role in the overall security of the software supply chain. Ensuring that end users adopt secure installation and updating practices is fundamental to preventing malicious actors from exploiting vulnerabilities.

Here are NIST recommendations for end users:

- Verify software sources: End users should only download and install software from reputable sources. Verifying the authenticity of the source helps prevent the inadvertent installation of compromised or tampered software. Where possible, users can also check the software’s code signing certificate.

- Keep software updated: Regularly updating software ensures that users benefit from the latest security patches and fixes. This practice is especially important for applications that have access to sensitive data or network resources.
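Source verification can be partly automated. The sketch below checks that a download URL uses HTTPS and points to a host on an approved list; the `TRUSTED_HOSTS` set and the `is_trusted_source` function are hypothetical, and `downloads.example.com` is a placeholder.

```python
from urllib.parse import urlparse

# Hypothetical list of hosts the organization allows downloads from.
TRUSTED_HOSTS = {"downloads.example.com"}


def is_trusted_source(url, trusted_hosts=TRUSTED_HOSTS):
    """Return True only for HTTPS URLs whose host is exactly on the list."""
    parsed = urlparse(url)
    return parsed.scheme == "https" and parsed.hostname in trusted_hosts


if __name__ == "__main__":
    print(is_trusted_source("https://downloads.example.com/app.pkg"))
    print(is_trusted_source("https://downloads.example.com.evil.test/app.pkg"))
```

Matching the full hostname, rather than checking whether the URL merely contains a trusted string, avoids being fooled by lookalike domains such as the second example.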

NIST guidelines for user education

End users who are educated about potential threats and best security practices are more resilient against social engineering attacks and less likely to fall victim to malicious activities.

- Implement training programs: Organizations should implement training programs to educate end users about the risks associated with software supply chain attacks, emphasizing the importance of vigilance during software installation and updates.

- Impart phishing awareness: Users should be trained to recognize phishing attempts, as these often serve as entry points for supply chain attacks. Simulated phishing exercises can be valuable in raising awareness and improving user responses.

NIST’s guidance on security policies

Organizations should establish and enforce security policies that guide end users in adopting secure behaviors. These policies should be communicated clearly and include guidelines for software usage and updating procedures. Organizations should ensure they incorporate the following:

- Implement access controls: Implement access controls to restrict user permissions, reducing the likelihood of unauthorized software installations or modifications.

- Conduct regular audits: Conduct regular audits to ensure compliance with security policies. This includes reviewing user activities, permissions, and adherence to software installation and updating procedures.

NIST’s framework for supply chain risk management is designed to be adaptive. It provides a foundation that organizations can build upon to address emerging threats and challenges in the software supply chain.

NIST provides guidance on incorporating AI and ML into cybersecurity practices. These technologies offer the potential to enhance threat detection, anomaly identification, and automated response, contributing to a more robust defense against sophisticated attacks. Organizations can leverage NIST’s recommendations to ensure the responsible and secure deployment of these technologies within the context of software supply chain security.

NIST acknowledges the importance of Zero Trust Architecture in modern cybersecurity. This approach challenges the traditional notion of trusting entities within the network and instead adopts a model of continuous verification, reducing the attack surface and mitigating the impact of potential breaches.

Blockchain, known for its decentralized and tamper-resistant nature, holds promise for fortifying the software supply chain. NIST has initiated research and provided guidance on its applications in various domains, including cybersecurity, offering valuable insights for organizations exploring its integration.

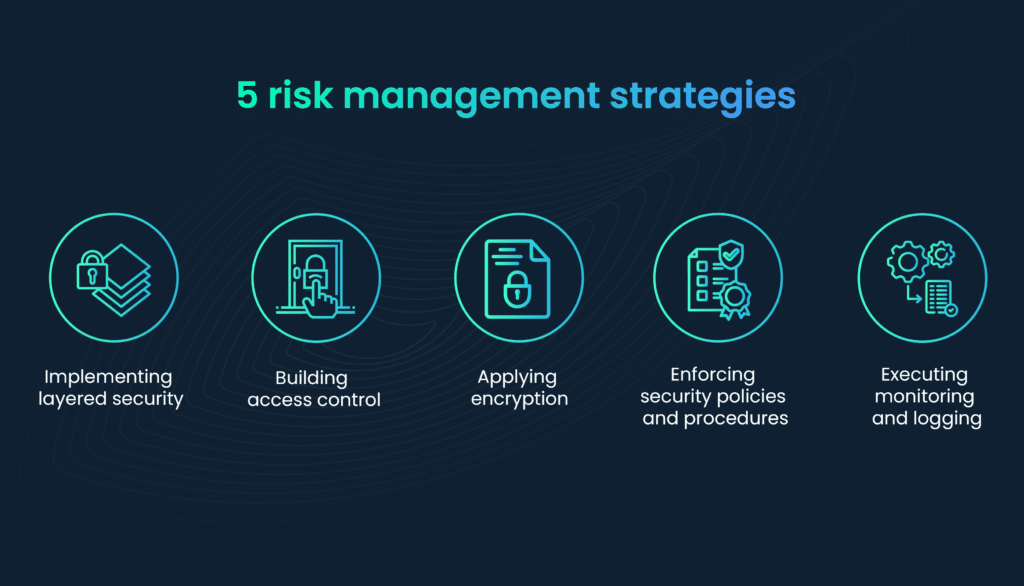

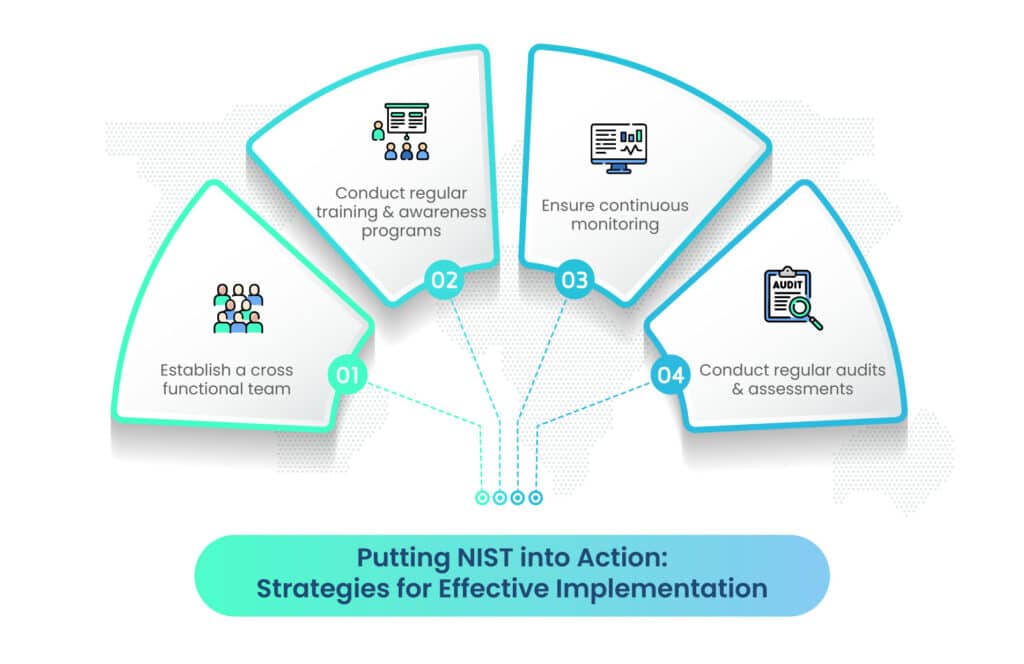

Strategies to implement NIST recommendations

Organizations can follow these practical strategies to effectively implement NIST recommendations:

- Establish a cross-functional team: Form a dedicated team comprising cybersecurity experts, developers, and supply chain professionals to collaborate on implementing NIST guidelines.

- Conduct regular training and awareness programs: Conduct regular training programs to educate employees about the evolving threat landscape and the importance of adhering to security protocols.

- Ensure continuous monitoring: Implement automated monitoring tools for continuous surveillance of the software supply chain, enabling early detection of potential threats.

- Conduct regular audits and assessments: Conduct regular audits to assess compliance with security policies, evaluate the effectiveness of risk mitigation strategies, and identify areas for improvement.

Looking ahead: Adapting to future challenges

As technology evolves, so do the challenges in cybersecurity. Organizations must remain vigilant and adaptable, anticipating future threats and challenges in the software supply chain.

NIST continues to play a vital role in the evolution of cybersecurity standards and guidelines. Organizations can rely on NIST to provide updated recommendations that address emerging threats and incorporate innovative solutions.

To stay ahead of adversaries, organizations should embrace innovative solutions and technologies. This includes exploring emerging trends such as AI, ML, Zero Trust Architecture, and blockchain.

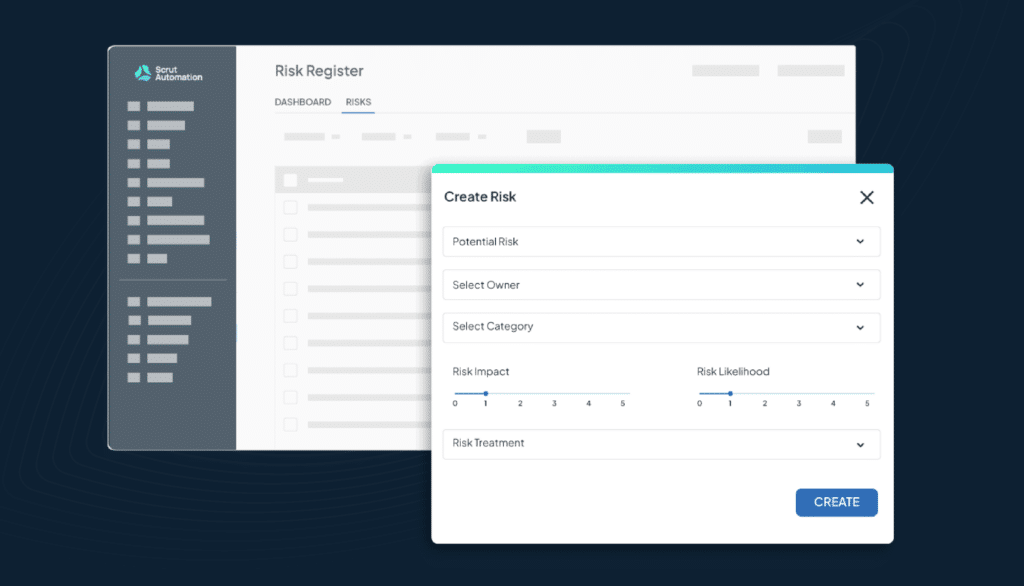

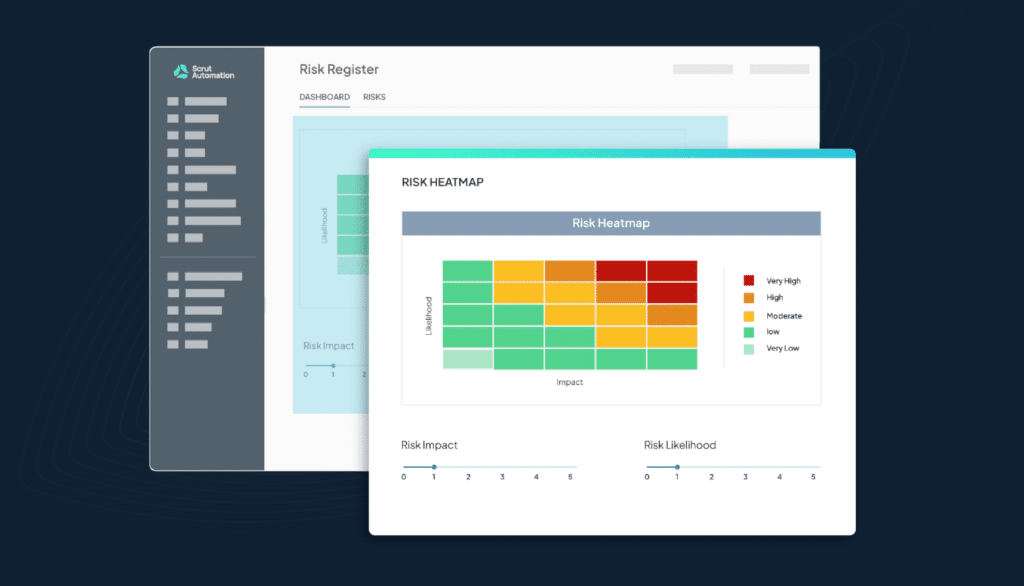

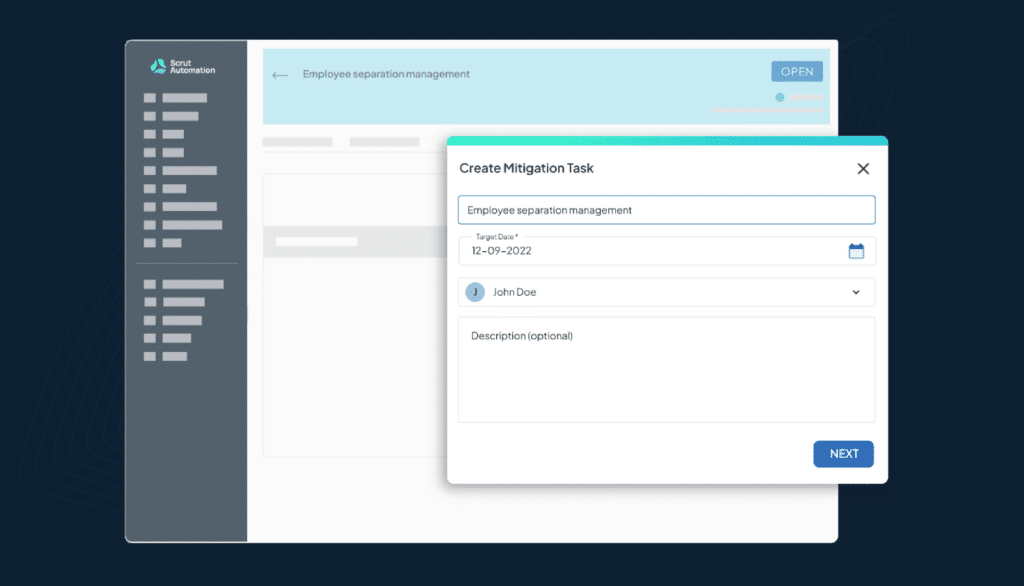

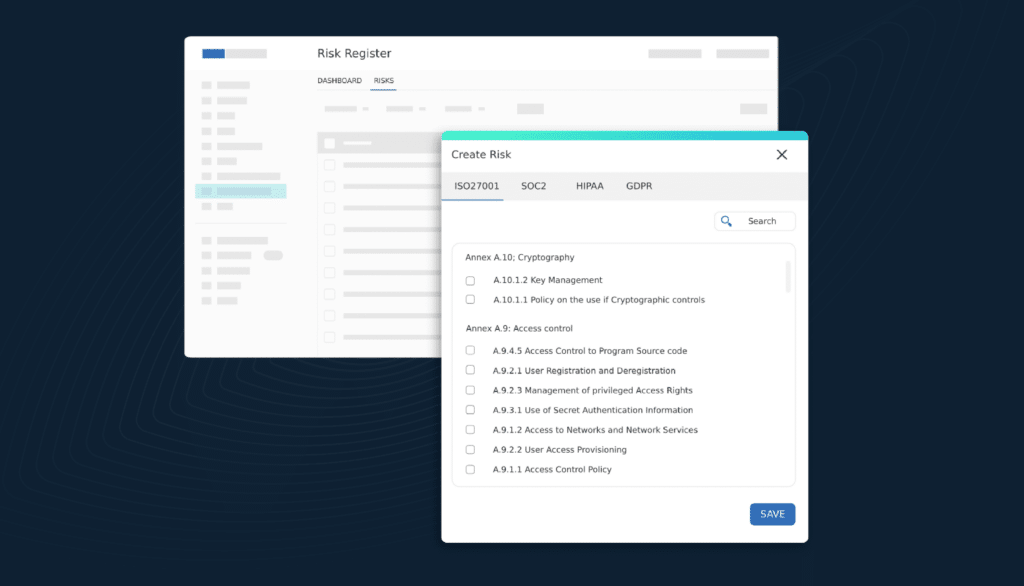

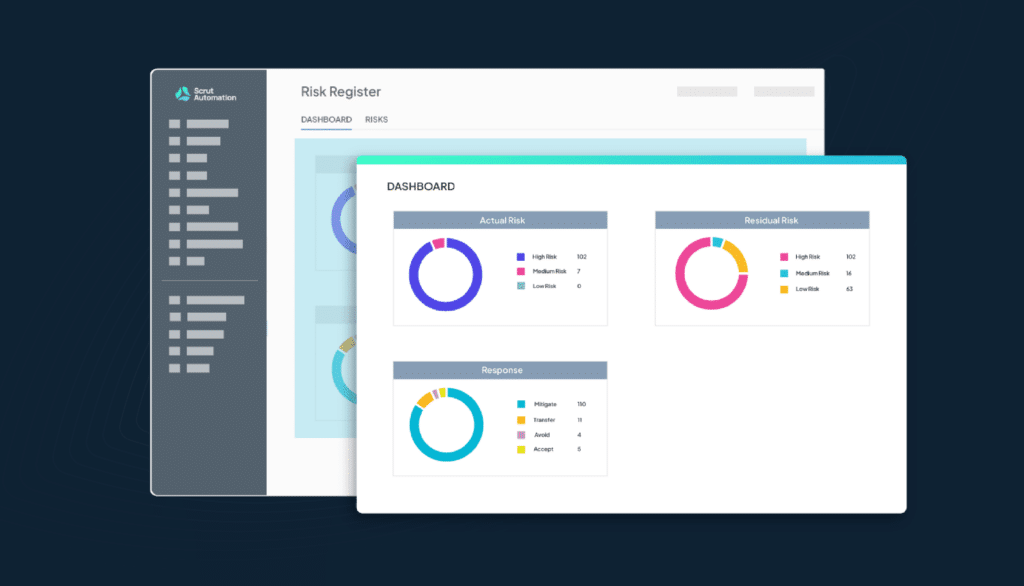

Scrut is a risk-first GRC software solution that assists you with NIST SP 800-171 and NIST 800-53 framework controls. With the tool, you can manage various compliance audits and risks and keep an eye on your controls for 24×7 compliance. To learn more, schedule a demo with us today!

Frequently Asked Questions

The primary objectives of NIST recommendations are to enhance the security and resilience of software supply chains. This includes risk assessment, integration of security into the SDLC, robust vendor and supplier management, and effective incident response and recovery strategies.

NIST provides an adaptive framework that allows organizations to stay ahead of emerging threats. The framework is designed to be flexible and responsive, enabling the incorporation of updated guidelines and practices to address the dynamic nature of the cybersecurity landscape.

NIST emphasizes thorough risk assessments of vendors, including evaluating their security practices and ensuring alignment with industry standards. This involves using security questionnaires, conducting third-party security audits, and incorporating security requirements into contractual agreements.

NIST recommends integrating security practices into every phase of the SDLC. This includes defining security requirements in the requirements analysis phase, implementing secure design principles, following secure coding practices, incorporating security testing, and ensuring secure deployment practices.

Continuous monitoring is a crucial aspect of the NIST framework, helping organizations detect and respond to potential threats in real time. It involves using automated tools, threat intelligence feeds, and ongoing collaboration with vendors to maintain a proactive and adaptive risk management strategy.