From the launch of IBM’s AI business tool Watson in 2011 to the arrival of ChatGPT in 2022, AI has come a long way in a short time.

The disruptive technology makes life a whole lot easier—it helps developers write code, aids in optimizing supply chain management, and even assists medical professionals in analyzing diagnostic data among many other functions.

But it has also complicated things. It’s no surprise that AI can wreak havoc when used for the wrong reasons (hackers using AI for instance), but it can also cause harm inadvertently.

Our dependency on AI has reached such a critical level that its errors can even cost lives. In one such instance, the Japanese police allowed an AI system to influence their decision not to place a child in protective custody. The system had miscalculated the risk, and the child later died.

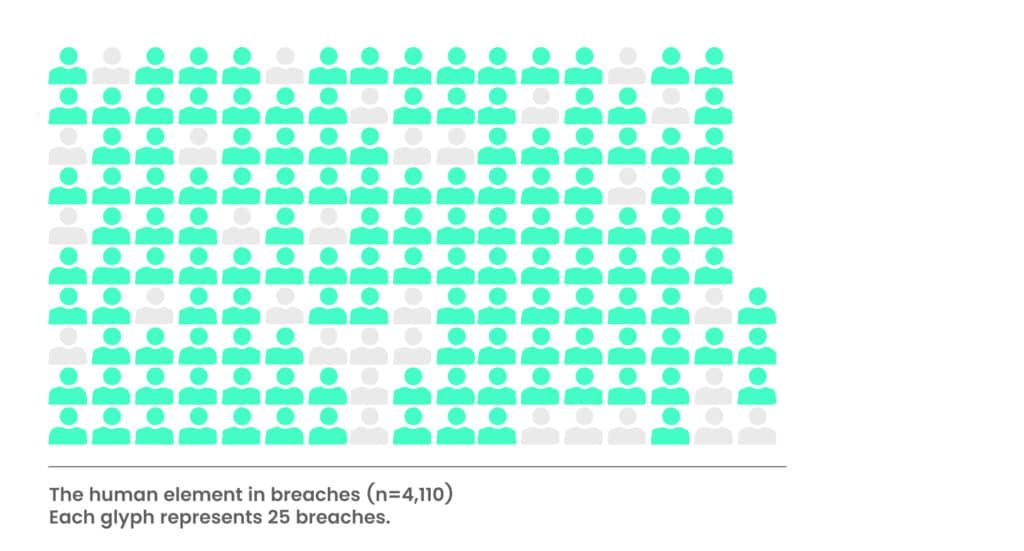

AI has also been known to perpetuate harmful biases such as gender and racial discrimination due to prejudices in its training data. From AI algorithms objectifying women’s bodies to Google’s image recognition misidentifying black people as gorillas, AI is miles away from being reliable and ethical.

These dangers, however, are regarded as minor chinks in AI’s armor, and it’s widely accepted that risks come with the territory. But what is the level of acceptable risk? How can we determine if AI is harnessing data responsibly? Are the mechanisms secured with the correct guardrails? The answer is still unclear!

What is clear is that responsible AI usage is the need of the hour, and we explore the reasons why in this blog.

The Need for AI with Accountability

The allure of AI lies in its ability to make complex decisions, analyze vast amounts of data, and optimize processes with unparalleled efficiency. However, the flip side of this advancement is a lack of understanding and control over how AI systems arrive at their outcomes.

Most organizations today, in every industry, are looking for ways to integrate AI into their applications. The same push is visible across their supply chains. However, there is no denying that every potential use case comes with potentially unforeseen risks.

Navigating the current landscape becomes more challenging when you have to:

- Identify if your third-party vendors employ AI and clarify its purpose

- Ascertain if AI was used in constructing their core applications

- Ensure vendors prioritize security and controls during rapid development

- Confirm the availability of apt talent and processes for robust AI control maintenance

AI models have become black boxes, often making choices that even their creators cannot fully explain. This lack of transparency has led to instances of discrimination, erroneous arrests, and even fatal consequences. It’s clear that without adequate transparency, AI can inadvertently perpetuate societal biases and contribute to undesirable outcomes.

This limited visibility into data and models makes it difficult for security leaders to predict and mitigate potential issues.

Let’s take a deeper look at some of the key concerns for security leaders when it comes to navigating the AI threat landscape.

Challenges within the AI Landscape

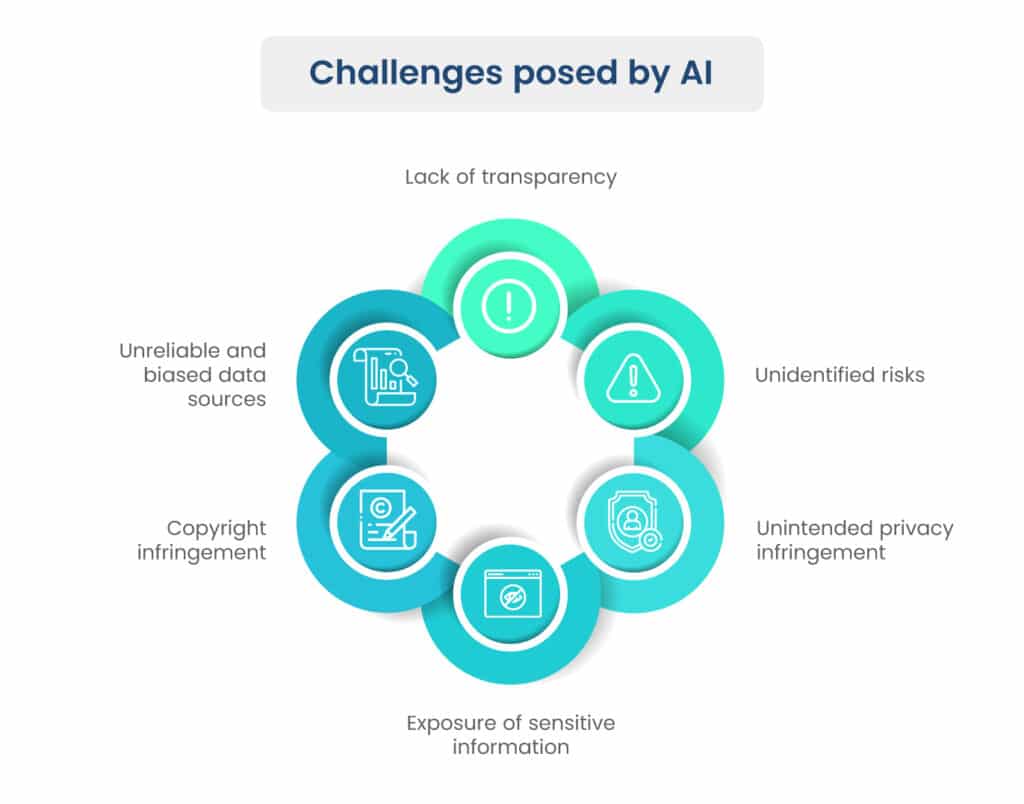

Diving deeper into the complexities of AI, we encounter a realm of challenges rooted in data intricacies and model biases. These challenges are particularly pronounced when we examine AI’s data sources:

1. Unintended Privacy Infringement

The very nature of AI, in its quest to learn and predict, can inadvertently lead to the incorporation of private data like personally identifiable information (PII) or protected health information (PHI). This unintentional input poses grave privacy concerns and ethical dilemmas, highlighting the need for robust data filtering mechanisms.
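To make the idea of a data filtering mechanism concrete, here is a minimal sketch in Python that redacts a few common identifier formats before text reaches a training pipeline. The pattern set, the `PII_PATTERNS` name, and the `redact_pii` helper are assumptions made for illustration. Real PII and PHI detection needs far broader coverage (names, addresses, medical record numbers) and is usually handled by dedicated tooling.

```python
import re

# Hypothetical patterns for illustration only; they cover US-style formats
# and will miss many real-world identifiers.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact_pii(text: str) -> str:
    """Replace every match of a known pattern with a [LABEL] placeholder."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


record = "Contact Jane at jane.doe@example.com or 555-123-4567. SSN: 123-45-6789."
print(redact_pii(record))
# Contact Jane at [EMAIL] or [PHONE]. SSN: [SSN].
```

Note that the name "Jane" passes through untouched. Pattern matching alone is a first line of defense and does not amount to a complete filter.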

2. Exposure of Sensitive Business Intelligence and Intellectual Property

AI systems, as they sift through diverse data streams, might unintentionally expose sensitive business insights or intellectual property. The automated nature of AI, while efficient, can inadvertently divulge strategic information, potentially jeopardizing competitive advantages.

3. Legal Complexities of Copyrighted Information

AI’s ability to ingest and generate content raises pertinent questions about copyright infringement. The use of copyrighted material from public sources can inadvertently lead to legal entanglements if not carefully managed, necessitating a thorough understanding of intellectual property laws in the AI context.

4. Dependence on Unreliable and Biased Data Sources

AI’s effectiveness hinges on the quality of its training data. Relying on unreliable or biased sources can lead to skewed outcomes. For instance, if AI is trained on historical data rife with gender biases, it might perpetuate these biases when making decisions, such as in hiring processes. Recognizing and rectifying these biases requires meticulous curation and continuous monitoring of training data.
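One simple way to start monitoring training data for this kind of bias is to compare outcome rates across groups. The sketch below is a hedged illustration, not a complete fairness audit: the `selection_rates` and `disparate_impact_ratio` helpers and the sample data are made up for this example. The 0.8 threshold mentioned afterward comes from the "four-fifths" rule of thumb used in US employment guidance.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the fraction of positive outcomes per group.

    records: iterable of (group, selected) pairs, where selected is a bool.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest to the highest selection rate (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group
    return min(rates.values()) / highest


# Made-up historical hiring outcomes, for illustration only.
history = (
    [("men", True)] * 6 + [("men", False)] * 4
    + [("women", True)] * 3 + [("women", False)] * 7
)

rates = selection_rates(history)
ratio = disparate_impact_ratio(rates)
print(rates, ratio)
```

In this invented dataset, women are selected at half the rate of men, well under the 0.8 rule of thumb. A model trained on such data without correction would likely reproduce that gap.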

Unidentified risks could lead to reputational damage, perpetuation of biases, or security vulnerabilities that threaten an organization’s integrity. And more importantly, the ever-evolving nature of AI systems means that new risks can emerge unexpectedly.

So, what’s the right approach to addressing these AI challenges?

Introducing ResponsibleAI: Your Path to Secure and Ethical AI

In the face of these challenges, Scrut proudly presents “ResponsibleAI,” a groundbreaking framework designed to empower companies with the tools and knowledge needed to navigate the complex world of AI responsibly.

The ResponsibleAI framework has been carefully crafted to meet the unique needs and requirements of modern AI-driven organizations.

Benefits of using ResponsibleAI

Scrut’s custom framework integrates top-tier industry guidelines, including NIST AI RMF and EU AI Act 2023, setting a new standard for excellence.

Here are some of the benefits of using ResponsibleAI.

1. Responsible Data and Systems Usage

ResponsibleAI ensures that your AI-powered systems adhere to legal and ethical boundaries. It guarantees that the data used for training AI models is collected within the boundaries of the law and privacy regulations. This crucial step ensures that your organization isn’t inadvertently violating data protection rules.

2. Ethical and Legal Compliance

With ResponsibleAI, you can rest assured that your AI-powered systems will not be used to break any laws or regulations. The framework restricts the usage of AI to prevent privacy invasions, harm to individuals, or any other unethical activities.

3. Risk Identification and Mitigation

One of the standout features of ResponsibleAI is its ability to identify and assess risks associated with your AI implementations. The framework provides clear visibility into potential risks based on your organization’s context, allowing you to prioritize and mitigate them effectively.
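To illustrate what risk prioritization can look like in practice, here is a generic likelihood-times-impact risk register sketched in Python. This is not how ResponsibleAI scores risks, which this post does not describe. The `Risk` class, the 1 to 5 scales, and the sample entries are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)

    @property
    def score(self) -> int:
        # Classic risk-matrix score: likelihood multiplied by impact.
        return self.likelihood * self.impact


def prioritize(risks):
    """Return risks ordered from highest to lowest score."""
    return sorted(risks, key=lambda risk: risk.score, reverse=True)


# Hypothetical entries drawn from the challenges discussed earlier.
register = [
    Risk("Copyrighted text in model output", likelihood=2, impact=3),
    Risk("PII in training data", likelihood=4, impact=5),
    Risk("Biased hiring recommendations", likelihood=3, impact=4),
]

for risk in prioritize(register):
    print(f"{risk.score:>2}  {risk.name}")
```

Even a simple ordering like this gives security leaders a defensible starting point for deciding which controls to implement first.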

4. Out-of-the-Box Controls

Navigating the uncharted waters of AI risks can be overwhelming for security leaders. ResponsibleAI simplifies this process by offering pre-defined controls that encompass various aspects of AI governance, training, privacy, secure development, technology protection, and more. This means you can implement the right controls from day one.

5. Building Customer Trust

In an age where skepticism about AI’s ethical use abounds, adhering to industry best practices in AI risk management becomes a beacon of trust for your consumers. ResponsibleAI paves the way for building a trustworthy brand image, ensuring that your customers feel safe interacting with your AI-powered products.

6. Avoidance of Fines and Penalties

While there might not be specific AI-related penalty definitions, improper AI use can still result in severe penalties due to detrimental outcomes. ResponsibleAI shields you from such legal pitfalls, helping you steer clear of financial and reputational damage.

7. Cost Savings Through Early Risk Identification

As the saying goes, prevention is better than cure. ResponsibleAI’s early risk identification capabilities can save your organization significant costs by nipping potential issues in the bud. This proactive approach prevents the need for costly and time-consuming system changes down the line.

Reimagine AI Responsibly with Scrut

In the fast-paced world of AI innovation, the ResponsibleAI framework emerges as a beacon of hope and guidance for organizations seeking to harness AI’s potential while mitigating its inherent risks. This framework is not just a tool; it’s a philosophy that transforms AI from a potential liability into a trusted ally.

To CEOs, CISOs, and GRC leaders from small and medium-sized businesses, ResponsibleAI offers a lifeline in the complex landscape of AI governance. It’s your blueprint for cultivating ethical AI practices that not only protect your organization but also build bridges of trust with your customers.

As we unveil ResponsibleAI to the world, we invite you to join us in this transformative journey. Embrace ResponsibleAI and pave the way for a future where innovation and ethics go hand in hand. Together, let’s redefine what AI can achieve—for the benefit of your organization, your customers, and society at large.